Data Mesh is often presented as the modern answer to the limitations of centralized data architectures. Promising scalability, stronger business ownership, and acceleration of analytics and AI use cases, the model appeals to many international groups. However, beyond the apparent clarity of its principles, its implementation requires a profound organizational transformation, far beyond a simple technical architecture decision. This article offers a strategic and pragmatic perspective on Data Mesh: its origins, its real benefits, its frequently underestimated risks, and the concrete conditions required to turn it into a performance lever rather than a source of complexity.


Data Mesh has become, in just a few years, a central concept in discussions about modern data architectures. Presented as an alternative to traditional centralized models, it promises scalability, business ownership, and acceleration of analytics and AI use cases. On paper, the proposition is compelling. In the reality of large organizations, it is considerably more demanding.

This article offers a complete and pragmatic perspective on Data Mesh: its origin, foundational principles, theoretical benefits, operational limitations, and the real conditions for success.

The concept was introduced in 2019 by Zhamak Dehghani while she was working at ThoughtWorks. Her foundational article, “How to Move Beyond a Monolithic Data Lake to a Distributed Data Mesh”, published on martinfowler.com, laid out the first principles of this new paradigm. She later expanded the framework in her book Data Mesh: Delivering Data-Driven Value at Scale (O’Reilly, 2022).

Data Mesh emerged from a simple observation: centralized data architectures such as Data Lakes and Data Warehouses become bottlenecks in large enterprises. Central data teams accumulate a backlog of requests, business knowledge sits far from the people implementing the pipelines, and the organization cannot scale its data work as fast as demand grows.

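Data Mesh is built on four structural pillars: domain-oriented data ownership, data as a product, a self-serve data platform, and federated computational governance.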

The ambition is clear: distribute responsibility while maintaining coherence. Conceptually, the balance is elegant.

Data Mesh addresses several well-known challenges:

In technologically mature environments, a number of positive case studies are frequently cited at conferences and in ThoughtWorks publications. The theoretical benefits are substantial:

However, these cases remain the exception rather than the rule across the broader market.

The most underestimated factor is organizational.

Data Mesh is not about deploying a new technical architecture. It requires a transformation of the company’s operating model. Business departments, including historically non-technical ones such as marketing or finance, must integrate structured data capabilities, data product ownership roles, and quality and governance responsibilities.

This transformation impacts:

Without strong and sustained executive sponsorship, the transformation typically fails.

Several large organizations have experimented with Data Mesh and later reverted to centralized or hybrid models. Recurring causes include:

In many cases, departments prefer returning to a central team perceived as easier to manage.

The paradox becomes clear: a model designed to reduce complexity can increase it if poorly calibrated.

Data Mesh promotes decentralization. Yet it demands a higher level of discipline than centralized models.

When poorly implemented, it can lead to:

Federation works only when shared rules are rigorously defined and operationalized.
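One way to picture "operationalized" shared rules is as an automated check that every domain runs before publishing a data product. The sketch below is illustrative only: the `DataProduct` descriptor, its fields, and the rules in `check_product` are hypothetical assumptions, not part of any Data Mesh standard or specific tool.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class DataProduct:
    """Hypothetical descriptor a domain team publishes for one data product."""
    name: str
    domain: str
    owner: str = ""                                        # accountable product owner
    schema: dict[str, str] = field(default_factory=dict)   # column name -> type
    freshness_sla_hours: Optional[int] = None              # maximum data age promised
    pii_columns: set[str] = field(default_factory=set)     # columns holding personal data
    classification: str = "internal"                       # assumed labels: internal / restricted


def check_product(product: DataProduct) -> list[str]:
    """Return shared-rule violations for one product (empty list means compliant)."""
    violations = []
    if not product.owner:
        violations.append("missing owner")
    if not product.schema:
        violations.append("missing schema")
    if product.freshness_sla_hours is None:
        violations.append("missing freshness SLA")
    # Every declared PII column must actually exist in the published schema.
    undeclared = sorted(product.pii_columns - set(product.schema))
    if undeclared:
        violations.append("PII columns not in schema: " + ", ".join(undeclared))
    # Assumed rule: any product carrying PII must be classified as restricted.
    if product.pii_columns and product.classification != "restricted":
        violations.append("PII requires 'restricted' classification")
    return violations


# Example products from two hypothetical domains.
orders = DataProduct(
    name="orders",
    domain="sales",
    owner="sales-data@example.com",
    schema={"order_id": "string", "email": "string"},
    freshness_sla_hours=24,
    pii_columns={"email"},
    classification="restricted",
)
leads = DataProduct(
    name="leads",
    domain="marketing",
    schema={"lead_id": "string"},
    pii_columns={"phone"},
)

for product in (orders, leads):
    print(product.name, check_product(product) or "compliant")
```

In a setup like this, the rules are owned centrally while each domain owns its descriptors. The check can run in every domain's delivery pipeline, so compliance does not depend on manual review by a central team.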

With the rise of AI, LLMs, and generative AI architectures, the topic becomes even more strategic.

AI systems require:

A mature Data Mesh can significantly accelerate AI strategies.

An immature one can amplify risks.

A realistic deployment requires:

In practice, many organizations converge toward hybrid models combining:

The “pure” model is rarely implemented in full.

Field experience shows that strictly applying the theoretical model is rarely appropriate.

Some organizations lack the necessary cultural and structural maturity. Others prefer to limit risk and move progressively. A pragmatic approach defines a clear strategic target while deploying incrementally: a pilot domain, a tested governance framework, progressive capability building.

In many contexts, adapting the recommendations is more effective than aiming for strict compliance with the original framework. The objective is not conceptual purity, but operational effectiveness.

Data Mesh is neither a myth nor a universal solution. It is an ambitious, structuring, and demanding framework.

On paper, the logic is coherent.

In practice, the transformation is deep, cultural, and organizational.

The key question is not “Should we adopt Data Mesh?”

The key question is “Which organizational model truly enables our data and AI strategy without generating uncontrolled complexity?”

Within Axel Douchin Consulting (www.douchinconsulting.com), I support large organizations in structuring data, cloud, and AI at scale, acting as a transition manager and strategic advisor.

The approach consists of:

The objective is not to impose a theoretical framework.

It is to build a robust data organization capable of sustainably supporting enterprise strategy and AI ambitions, while maintaining control over complexity and risk.

Data Mesh can be a powerful lever.

Provided it is treated as a strategic transformation, not merely as a technical architecture.

About the Author

Axel Douchin is a Cloud, Data, and Artificial Intelligence (AI) executive and interim CIO, CTO, and Chief Data Officer specializing in complex digital transformation programs. With more than 20 years of international experience—including leadership roles in global technology initiatives and work with Amazon Web Services—he helps organizations design and execute large-scale cloud migrations, enterprise data strategies, and AI-driven platforms. His work focuses on data governance, scalable cloud architectures, and pragmatic approaches to deploying AI in regulated and high-complexity environments.

Topics: Cloud Strategy · Data Governance · Enterprise Data Platforms · Artificial Intelligence · Digital Transformation

More insights on Cloud, Data, and AI strategy:

www.douchinconsulting.com

Expert analysis on data, cloud, and change management.

Europe can't afford to play catch-up. While others race to 3nm, we should leap directly to 2nm, just like Japan is doing with Rapidus. It’s bold, risky, and exactly what we need. In this article, I argue why Europe should go all-in on 2nm and what it would take to make it happen.

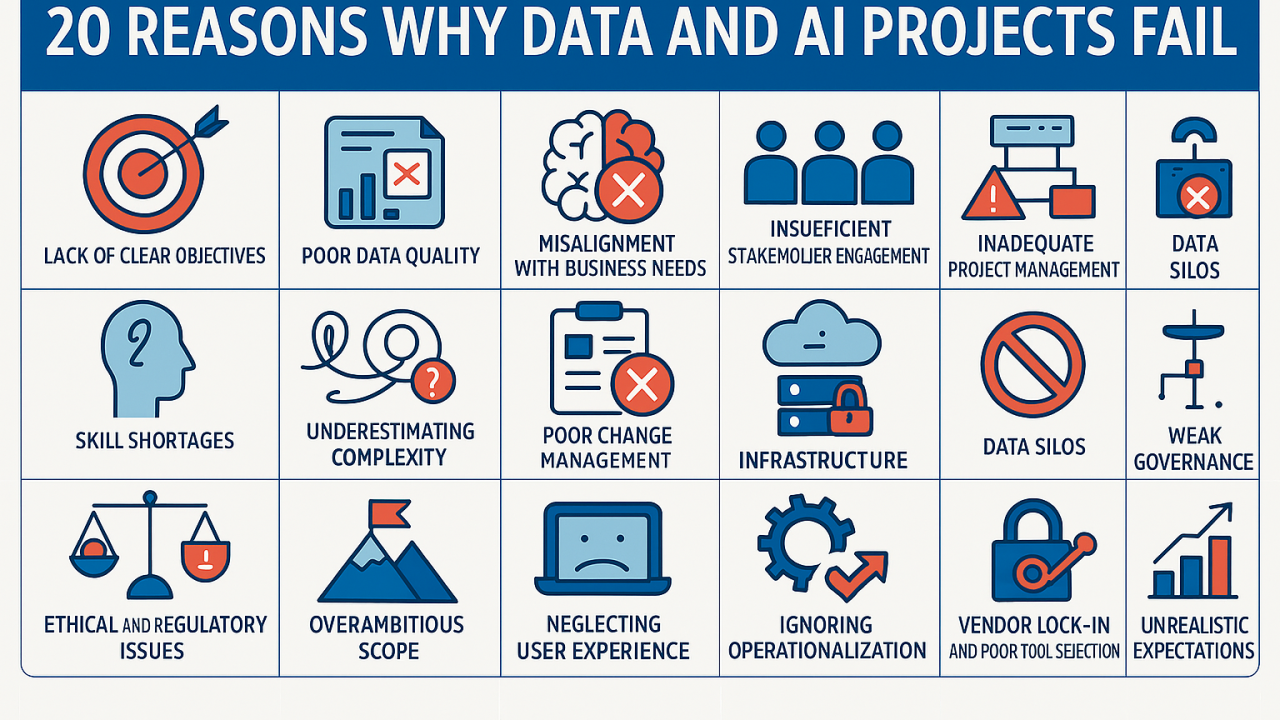

Avoid AI an Data failure, learn from other company's mistakes

Frustration has always been very high with BI solutions. breaking models and dashboards has always been an issue. It often required to prevent end users to create what ever they wanted for governance sake.

Expert guidance for seamless cloud and data transitions. Unlock value, ensure compliance, and lead with confidence.